In 1996 and 1997, the reigning world chess champion Garry Kasparov played two matches against Deep Blue, an IBM supercomputer optimised to compete against humans in tournament conditions. Kasparov won the first match, in 1996, but lost the 1997 rematch, prompting worldwide discussion of AI’s growing aptitude at tasks traditionally considered tests of human intelligence.

Kasparov—a remarkable thinker who’s become an important Russian opposition political activist since retiring from competitive chess in 2005—was troubled by his loss to Deep Blue, and accused the IBM team of various forms of cheating during the match.

But instead of withdrawing from “man versus machine” contests, he became a leading proponent of them. In 1999, he won a match titled “Kasparov versus the World”, in which 50,000 human opponents, coordinated by a computer, played the black pieces while the grandmaster played white. In 2003, he drew a six-game match with Deep Junior, one of the strongest chess programs of its day.

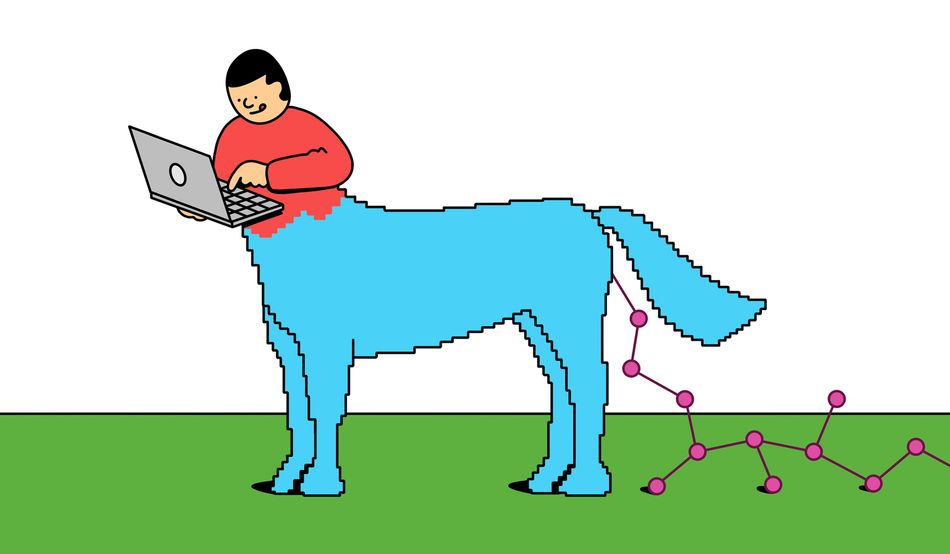

Perhaps his most interesting response to the Deep Blue loss was the invention of a new form of chess that he called “advanced chess” or “centaur chess”—named after the powerful Greek mythological creatures that fuse the torso of a man with the body of a horse. In this game, rival players have access to advanced chess computers that can evaluate far more positions than the top human players.

But the players ultimately decide what strategies to pursue, wielding their chess engines as weapons, rather than competing against them as opponents. Kasparov believed centaur chess would allow for chess at a level that had never been seen before, with blunder-free play alongside all the drama and strategy that humans provide.

Kasparov’s “centaur” concept was taken up by the US military: in 2015 deputy secretary of defence Robert Work began advocating for “centaur warfighting”, in which human judgement combined with the speed of AI systems would allow for unprecedented military success. And the idea of the AI-empowered centaur has become a popular marketing trope, supporting a vision of a tech-enabled future in which employees paired with powerful AIs are hundreds of times more efficient than ordinary humans.

The idea has prompted sharp critiques: Cory Doctorow proposes we understand this new paradigm as including both centaurs—smart humans given powerful computers to handle tedious and repetitive aspects of work—and “reverse-centaurs”, in which machines have human assistants who smooth out their defects and limitations, but who are also required to work at their relentless pace.

Arguably, contemporary AI depends on reverse centaurs—the tens of thousands of workers in developing nations who are paid pennies per task to label images or fine-tune chatbot responses. However we feel about the politics of centaurs, they are increasingly real. And I should know. I work with one.

I have a student who reliably accomplishes 20 times as much work as I have come to expect from a young programmer. We are collaborating on a research project this semester, and I assigned him a task that should have taken a semester’s worth of hard work. One Friday in early February, he asked me numerous questions about the experiment, and came back the following Monday with preliminary results that I was expecting in May.

The people working out how to use AI to become superhuman have two complementary sets of skills. They have very good mental models of the problems they’re solving: in this case, my student is a computational thinker who understands what computers do and how they do it. Using this knowledge, he can break up complex problems into pieces that AIs can solve efficiently.

He also has a finely developed set of skills in getting AIs to do what he wants them to. I am a good computational thinker, but I’m pretty weak at making the AI do what I need. As a result, the systems that make him 20 times faster make me only a little bit faster.

While it’s an incredible luxury to work with a centaur student, as an educator I am deeply concerned. For decades, we’ve trained computational thinkers by teaching them how to code. The long and frustrating process of learning to make a computer do what you want it to do helps many people develop powerful mental models that prepare them to solve problems using computers.

But most of the ways we teach programming are now easily defeated by AI. It’s trivially easy for AIs to complete assignments designed to teach programming. Some students—perhaps lured by the increasingly suspect promise that a computer science (CS) degree leads to guaranteed employment—are short-circuiting the processes we’ve used to train computational thinkers.

Some of my students report that their peers—CS master’s students—cannot write simple programs without AI assistance. And some of my fellow professors are turning to decidedly analogue ways of evaluating student knowledge, including handwritten exams.

When you give powerful AI to people who don’t understand what it’s doing, they can do stupid things very quickly. And even experienced programmers are finding it hard to make productivity gains from AI. One recent study compared the performance of experienced programmers with and without coding aids.

The programmers using coding aids estimated that AI had made them 24 per cent more efficient. Actually, they were 19 per cent less efficient than similar programmers completing the same tasks without AI.

A study of contributions to a popular software archive—GitHub—offers more nuance. The researchers used software to detect AI-authored submissions to the repository, and concluded that 29 per cent of code submitted using the Python programming language was likely AI-generated. The overall pace of submission to the archive is growing at roughly 4 per cent per quarter, suggesting that AI is making coders more productive. But the gains were concentrated among the most experienced coders who had been contributing to the archive for many years. Less experienced coders saw no productivity improvement, and some declined in productivity.

There’s a worrying possibility here: if AI helps experienced coders the most, but access to AI coding tools stops people from learning basic programming skills, might our future lack centaurs because the humans can’t hold up their end of the bargain? People continue to aspire to become chess grandmasters, despite the contemporary chess computers that now trounce the best human players. But it is not clear if a new generation of programmers will learn their art, as their predecessors did, through frustrating repetition, and what we might lose if they don’t go through this hard learning.

There’s a cautionary postscript to my work with the centaur student. We tried to make sense of the amazing results he and the AI produced. After many hours, we decided to examine the raw data, rather than the shiny graphs and summaries the AI created. We discovered that, somewhere in the many steps of producing data for our experiment, we had overestimated the skills of the AI and it had produced unusable garbage. It had then rapidly processed that garbage into a huge set of impressive-looking results that were, on close analysis, totally meaningless.

As we develop the relationship between humans and AI, we have much to learn about how to evaluate the work produced. My student and I are falling back on a technique introduced into software development about 25 years ago—test-driven development.

Complex code is shown to do what developers believe it does by forcing it to pass hundreds of “unit tests”. If each unit works correctly, and they are connected correctly, there’s good reason to believe the system as a whole will work predictably. We’re doing the same thing now as researchers, making sure each stage of our work passes a test so we can avoid the likely outcome of doing something really dumb, really fast.
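
For readers who want to see what stage-by-stage testing looks like, here is a minimal Python sketch of the idea. It is not the code my student and I use: the function names, the input format and the expected 0-to-1 range are all hypothetical, chosen only for illustration. Each stage is followed by a check that fails loudly, so bad data cannot flow quietly into polished-looking summaries.

```python
from statistics import mean


def load_measurements(rows):
    """Stage 1 (hypothetical): parse raw text rows into numbers."""
    return [float(r) for r in rows]


def check_measurements(values, low=0.0, high=1.0):
    """Test for stage 1: data exists and sits in the range we assume is valid."""
    if not values:
        raise ValueError("no measurements loaded")
    bad = [v for v in values if not (low <= v <= high)]
    if bad:
        raise ValueError(f"{len(bad)} value(s) outside [{low}, {high}]")
    return values


def summarise(values):
    """Stage 2 (hypothetical): reduce the data to the numbers we would plot."""
    return {"n": len(values), "mean": mean(values)}


def check_summary(summary, values):
    """Test for stage 2: the summary must be consistent with the raw data."""
    if summary["n"] != len(values):
        raise ValueError("summary count does not match the raw data")
    if not min(values) <= summary["mean"] <= max(values):
        raise ValueError("mean lies outside the range of the raw data")
    return summary


def run_pipeline(rows):
    """Run every stage, refusing to continue past a failed check."""
    values = check_measurements(load_measurements(rows))
    return check_summary(summarise(values), values)
```

With these assumptions, `run_pipeline(["0.2", "57.0"])` stops with a `ValueError` at the first check instead of reporting a mean. The checks here are deliberately crude; the point is only that every stage has one.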

Harnessing the power of AI across society is going to require the same sort of rigour, patience and hard-won expertise shown by those developers. Without it, potential centaurs could end up flattening us in a stampede of follies.