When Alvi Choudhury opened his door to two police officers in January, he assumed they wanted footage from his video doorbell. “Hello officers, I’m not in trouble, am I?” he joked. “Alvi, right?” one of them responded. “You’re under arrest.”

Choudhury, a 26-year-old British Asian software engineer from Southampton, was a suspect in a burglary committed in Milton Keynes—a town more than 100 miles away. Initially, he thought the matter would be cleared up quickly; he’d been working at the time of the incident and he’d never even been to Milton Keynes. But the arresting officers from Hampshire Constabulary didn’t want to know. Choudhury was led away in handcuffs. He spent 10 hours locked in a cell while he waited for officers from Thames Valley Police to come and question him.

When the interview finally took place, Choudhury was shown an image of the suspect, another South Asian man. “Does this look anything like me?” he asked, and the officers laughed about the lack of resemblance. Choudhury was eventually released at around 2am. “I assumed this was pure racism,” he says. A week later, after filing a complaint against Thames Valley Police, he found out the full story: he’d been flagged as a possible suspect by an automated facial recognition system.

Thames Valley Police wrote to Choudhury, acknowledging that his arrest “may have been the result of bias within facial recognition technology”—something it knew to be a “wider issue”. Confusingly, however, the force concluded that his arrest was not a case of racial profiling, because officers had made their own assessment of the images before arresting him. Choudhury did not find that particularly reassuring. “In my head, if a brown person in Scotland robs a bank, they are going to be coming to arrest me,” he said.

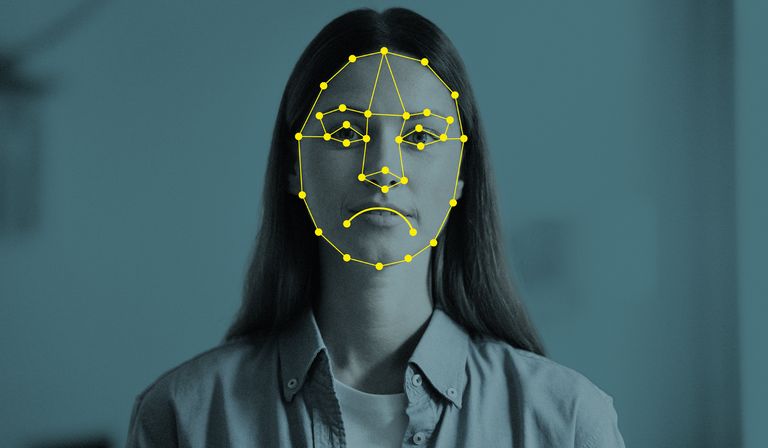

Police in the UK are increasingly likely to look for suspects using facial recognition technology. More than 25,000 “retrospective” facial recognition searches are now made every month using the police national database, comparing images taken from sources such as social media and CCTV to a database of more than 19m custody images, or mugshots. Searches are also run on the passport and immigration databases. The Crime and Policing Bill, currently making its way through parliament, would open up the possibility of searches on the driving licence database as well.

“Live” facial recognition cameras, which scan the faces of passers-by to search for wanted people in real time, now regularly appear on British high streets. Last year, police forces scanned a total of 8.6m faces, up from 4.7m the year before. Several are rolling out handheld facial recognition devices, while senior officers have discussed combining the technology with bodyworn video cameras and drones—though a spokesperson for the National Police Chiefs’ Council (NPCC) said there are currently no plans for this. Police leaders have stressed that the technology will be used responsibly. As it stands, in the absence of any specific laws governing police use of facial recognition, the public is asked to take this on trust.

One month before Choudhury’s arrest, the UK government opened a consultation on legislation to govern police use of facial recognition technology, beyond the current patchwork of data protection and human rights law. The Home Office made its aims clear: “The consultation will pave the way for new laws so all police forces can use this new technology with greater confidence and more often.” Lindsey Chiswick, facial recognition lead at the NPCC, added: “Live facial recognition is already subject to strong safeguards and rigorous oversight, and policing remains committed to using it proportionately and responsibly.”

Other views are available. There have been five non-interim biometrics commissioners—offering independent oversight of police use of DNA, fingerprints and, more recently, facial recognition—since the post was established by the government in 2013. Every one of them has called for stronger regulation of facial recognition. William Webster, currently in post, has described the consultation as a “once-in-a-generation” opportunity to finally get regulation right.

In January 2014, the first commissioner, Alastair MacGregor, heard police were exploring plans to upload custody images to the police national database so they could be searched using facial recognition technology. At the time, MacGregor’s remit extended only to how police used DNA and fingerprints, but he asked to be kept informed. Three months later, at a meeting of police chiefs, he was told that 12m custody images had already been uploaded to the database and that a facial recognition function had gone live five days before. Concerned, he wrote to Durham Constabulary chief constable Mike Barton, who chaired the meeting, diplomatically noting “that difficult legal, political and other problems may well quickly arise”.

MacGregor’s successor, Paul Wiles, warned in 2017 that the varying quality of custody images across forces posed a risk of “false intelligence or wrongful allegations”. Wiles also noted, with some concern, that forces had begun using the technology “to try and identify individuals in public places”.

Leicestershire Police was the first UK force reported to use facial recognition in public, at the 2015 Download music festival. Fourteen months later, the Metropolitan Police deployed cameras at Notting Hill Carnival, the first of 10 test deployments conducted between 2016 and 2019. Independent researchers from Essex University, Pete Fussey and Daragh Murray, were invited to observe this work; their report found it “highly possible” that the force’s use of facial recognition “would be held unlawful if challenged before the courts”.

The following year, it was a different force that found itself facing such a court challenge. In 2020, civil liberties campaigner Ed Bridges won a challenge to South Wales Police’s use of facial recognition, when the UK’s Court of Appeal ruled the force had breached privacy, data protection and equalities laws. (Bridges was joined in the claim by human rights group Liberty which, as the parent organisation of Liberty Investigates, now pays my wages.)

When I joined Liberty Investigates in January 2023, I started to look into facial recognition. After the Bridges judgment, forces paused live deployments for nearly two years. But I soon discovered that the technology had never really gone away.

Retrospective searches using the police national database had continued. Initially, the number of searches had grown slowly but steadily, from 3,360 in 2014 to nearly 20,000 in 2021. Then, the search algorithm was upgraded, and use exploded. In 2022, the number of searches rose to 85,000. There was, however, a crucial problem.

Custody images, as the name suggests, are taken after suspects are arrested. In 2012, a judge ruled police were unlawfully keeping photos of suspects who were released without charge. In 2021, the third biometrics commissioner, Fraser Sampson, found police forces were continuing to “retain the vast majority of their custody images indefinitely, regardless of whether the individual has been convicted of an offence”. Two years later, Sampson told me the problem was ongoing. “It really isn’t good enough,” he said.

With the arrival of facial recognition, having a photo on the police national database created a heightened risk of being flagged as a criminal suspect. That is exactly what happened to Choudhury, who says his custody photo was taken in 2021 after he was attacked on a night out then mistakenly identified as a suspect. He was released without charge, but his photo stayed on the system and, several years later, popped up as a match for the Milton Keynes burglary.

My own reporting has revealed numerous instances of police forces pushing ethical and legal boundaries. I’ve discovered police conducting secret searches of the UK’s passport and immigration databases, police staff accessing a facial recognition search engine described as “invasive and dangerous” by MPs, and live facial recognition watchlists including hundreds of children as young as 12.

The question of whether the technology discriminates—producing higher rates of false positives for women, children and people of colour—has been the core of the public debate about its use. In the early years, the answer was an incontrovertible yes. As the accuracy of the technology has improved, the issue has become less clearcut. The National Physical Laboratory, reviewing the Met Police live facial recognition system in 2023, found “a substantial improvement” in accuracy, a finding the force cited as evidence that its technology was free of bias. However, researcher Pete Fussey publicly challenged that assertion, saying there was not enough data to support such a conclusion. “Few, if any, in the scientific community would say the evidence is sufficient to support these claims,” he said.

Today, the most pertinent questions relate not to whether the technology is biased, but to whether it is being used in a biased way. Whether used live or retrospectively, facial recognition systems allow the user to set “thresholds” for search results—essentially the level of confidence required for an image to be suggested as a match. The lower the threshold, the more potential matches. But, as the threshold goes down, bias creeps in. Tests have repeatedly shown women and people of colour are more likely to be incorrectly identified as matches when lower thresholds are used.
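To make the threshold trade-off concrete, here is a minimal sketch in Python. It is not any force's actual system: the similarity scores, the image identifiers and the `suggested_matches` function are all invented for illustration, and real systems score faces on their own scales.

```python
# Illustrative only: every score and identifier below is made up,
# not taken from any police database or vendor product.
from typing import NamedTuple


class Candidate(NamedTuple):
    image_id: str
    similarity: float  # hypothetical scale: 0.0 (no resemblance) to 1.0 (identical)


def suggested_matches(candidates, threshold):
    """Return the candidates whose similarity meets the threshold, best first."""
    hits = [c for c in candidates if c.similarity >= threshold]
    return sorted(hits, key=lambda c: c.similarity, reverse=True)


# Hypothetical search results for a single probe image.
candidates = [
    Candidate("custody-001", 0.91),
    Candidate("custody-002", 0.74),
    Candidate("custody-003", 0.66),
    Candidate("custody-004", 0.58),
]

# Lowering the threshold lets weaker resemblances through.
for threshold in (0.9, 0.7, 0.5):
    ids = [c.image_id for c in suggested_matches(candidates, threshold)]
    print(threshold, len(ids), ids)
```

With these made-up scores, a threshold of 0.9 suggests one image, 0.7 suggests two and 0.5 suggests all four. Every extra candidate is another person a human operator has to rule out.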

In December, I discovered that forces knew about this problem, but lobbied to search the police national database with those settings anyway because they wanted to generate more potential matches. They argued that the bias posed little risk, because human operators would make final decisions about whether to make an arrest—but that did nothing to prevent Choudhury from spending 10 hours in custody for a crime he did not commit. As his lawyer, Iain Gould, told me: “If the police want to take advantage of facial recognition to locate suspects, massively widening the net of their investigative abilities, they must ensure that artificial intelligence is not substituted for human intelligence and due diligence.”

Choudhury is not the only person to take legal action after being misidentified. In November, South Wales Police paid damages to a black man who was wrongly arrested after appearing 32nd in a list of suggested facial recognition matches for a suspect wanted for stalking offences. South Wales Police said it “apologised that the service provided was not acceptable in this case”.

Two separate solicitors told me they are working on other claims of wrongful arrest based on facial recognition matches. These, of course, are cases that have made their way to lawyers. How many people may have been incorrectly flagged by facial recognition, then questioned or arrested—and never told how they were identified as a suspect?

The Home Office points out that a new national facial recognition system has been procured and says it is confident bias will no longer be an issue. Unfortunately, bias is not the only way in which people can be wrongly identified by facial recognition. Staffordshire Police told me it has used composite images of suspects—an evolution of the traditional police sketch—to search for facial recognition matches. One of the academics who developed the system says research is still ongoing to determine if this can be made to work. At the moment “they don’t work very well,” he tells me. “The recognition rates are very poor.”

On a Tuesday morning in January, Court 73 at the Royal Courts of Justice was full to witness the latest High Court challenge to the police’s use of live facial recognition. The case was brought by Shaun Thompson, an anti-knife-crime activist, and Silkie Carlo, director of civil liberties campaign group Big Brother Watch, against the Met Police. Officers had stopped Thompson, who is black, and questioned him for more than 20 minutes, forcing him to show identification.

For Thompson and Carlo, lawyer Dan Squires argued that there are no meaningful limits on how the police can use facial recognition, and that the number of deployments had grown “exponentially”. Police guidelines identify “permitted locations” where facial recognition cameras can be used, but Squires argued these included most of the public spaces in London. Without limits, he said, it would be “impossible” for anyone to travel across the city without being captured by facial recognition. The Met’s lawyer, Anya Proops, countered: “This claim raises a full-scale attack on the legality of my client’s use of [live facial recognition] to police the capital.”

The next morning, Thompson arrived wearing a black hoodie emblazoned with the slogan “Stop and Search on Steroids”. As the hearing began, the discussion touched briefly on the issue of people being misidentified. Proops described this to the court as “a vanishingly small problem”; I wondered if Thompson would agree. There was a discussion about who exactly was being placed on police watchlists. At one point, proceedings turned to whether facial recognition could be integrated into London’s CCTV network. The hearing concluded and the judges left to deliver their verdict at a later date. It remains unclear whether the Met—or the courts—will agree to any stricter limits being placed on use of the technology.

Facial recognition has been described as a “game-changing tool” by key figures within the police, including the Met commissioner, Mark Rowley. Sarah Jones, the policing minister, has called facial recognition the “biggest breakthrough for catching criminals since DNA matching”. Yet it’s unclear to what extent that is true of the way in which the technology is currently being used.

In Croydon’s Fairfield ward, where fixed facial recognition cameras have been installed, the Met Police has reported a 12 per cent reduction in some crimes. Closer analysis reveals that crime figures actually increased in one of the immediate areas where fixed cameras operate, and in three of the six wards neighbouring Fairfield. None of this disproves that facial recognition has a meaningful impact on crime, but what is the risk that crime simply takes place elsewhere or at another time?

Then there is the question of who is currently being caught. Police forces publicise arrests made during facial recognition deployments, including suspects wanted for serious crimes such as assault, kidnap and sexual offences. But my analysis of facial recognition arrests, based on a combination of those publicly announced by police and data obtained via freedom of information requests, suggests theft is the most common offence for which suspects are identified using facial recognition. Drug offences and criminal damage are among the other most commonly cited reasons, while suspects have also been arrested for driving and immigration offences.

It is also unclear how cost-effective the technology is. The Met has previously told me it doesn’t monitor the cost of live facial recognition operations, making it impossible to compare with traditional policing operations. I wondered if any other forces had attempted to make this calculation, so I submitted public records requests to six others that were using live facial recognition, plus the Home Office and NPCC, asking for assessments of the technology’s cost-effectiveness. Without exception, they told me that work had not been done. However, in her witness statement to the court, Lindsey Chiswick said the cost for each arrest using traditional methods was 53.9 per cent higher than for those using facial recognition, based on the number of officer hours involved. When I asked the Met how it had arrived at this figure, a spokesperson confirmed the calculation did not take into account the cost of the technology or the offences for which arrests were being made.

Facial recognition will find more suspects the more widely and regularly it is used. Fixed cameras could in theory be deployed across every public space and checked against a watchlist of everyone wanted for any offence—from violent crimes to unpaid parking tickets and expired visas. If such a system worked perfectly, it would usher in an age of total law enforcement. It would also reverse the presumption of innocence and subject every citizen to repeated checks for the absence of suspected guilt.

Live facial recognition is being piloted by the British Transport Police at train stations in London, and cameras are increasingly being used to scan crowds attending football matches. In west London, Hammersmith and Fulham Council recently approved plans to use facial recognition across parts of its CCTV network. This capability would allow anybody to be tracked and monitored, any suspicious behaviour flagged for investigation. If this sounds like the stuff peddled by science fiction films and tin foil hat wearers, it is—and it is also already happening. In December, I revealed the existence of a police AI tool that analyses data from licence plate readers to track drivers across the UK and identify people making “suspicious” journeys. The Home Office says facial recognition is a “crucial tool” for police with established laws on its use, adding: “Officers are given guidance and training to minimise errors.”

On a Tuesday afternoon in March, I arrived at Hammersmith station to observe a Met Police live facial recognition operation. Around 10 uniformed officers stood around a van equipped with cameras. Zoë Garbett, a Green Party London Assembly member, was questioning two police representatives about the planned rollout of handheld facial recognition devices, which only became public after Garbett’s pointed questioning of London mayor Sadiq Khan. “I just find it really concerning,” she said. “The national government literally just closed a consultation on the legal framework. At what point are people going to know that they could have their face scanned by a device in a police officer’s pocket?”

We milled around in the vicinity of the cameras for a while. A police car pulled up, sirens blaring and lights flashing, blocking traffic so a convoy of marked and unmarked police cars could pass through. Garbett noticed something and turned to the officers next to us with concern. “Do you know why they’ve got their faces covered?” she asked, referring to officers inside one of the cars. There was a brief pause before one of the men in uniform quipped: “Maybe they’re trying to dodge the cameras.”

Late last year, when I saw a police facial recognition van in the street between my office and the underground station, I lamented not carrying a scarf or mask and pulled my T-shirt up over my face. I’d been writing about facial recognition for more than two years, but it was the first time I’d seen the technology in operation. Part of me felt childish for trying to avoid the cameras, but I also felt compelled to resist a forced identity check. I attracted some bemused glances as I awkwardly hurried past the assembled police officers, but nobody stopped me.

This time, as I stood next to the cameras, I made no attempt to hide my face. Partly, I was afraid of looking ridiculous while there in a professional capacity. But it also felt like a pointless gesture. This was not the first and wouldn’t be the last time I saw a police facial recognition camera, and the technology can now identify masked faces anyway. I could think of no good reason why I would be on a police watchlist. In other words, I had nothing to hide. So why did it feel like I had something to fear?